Detector Technology

The best telescopes in the world are only as good as the technology used in detecting light from astronomical phenomena. Modern detector technology does far more than just take pretty pictures: it’s the way astronomers get any data about the stars, galaxies, and other bodies they study. Astronomical detectors use cutting-edge materials and electronics research to provide the best information possible to astronomers.

Our Work

Center for Astrophysics | Harvard & Smithsonian researchers engage in detector research, both in building instruments for observatories and in developing new technologies:

- The GMT-Consortium Large Earth Finder (G-CLEF) is a spectrograph designed and built at the CfA for use in the Giant Magellan Telescope (GMT) in Chile. When completed, this 22-meter (72-foot) telescope will be the largest visible-light telescope in the world. G-CLEF will enable the GMT to measure the masses of Earth-size exoplanets to great precision, and identify molecules in planetary atmospheres that could indicate life.

- The MMT Observatory is the largest telescope at the CfA’s Fred Lawrence Whipple Observatory (FLWO) in Arizona. CfA engineers and scientists developed a suite of instruments for the MMT for imaging and analyzing the spectra of astronomical objects in optical and infrared light. These instruments make the MMT a powerful general-purpose telescope, and their design has been carried over for use in other observatories, including the Magellan Telescopes in Chile.

- Solar astrophysics also requires building detectors for electrons, protons, ions, and other minuscule pieces of matter streaming from the Sun. CfA engineers provided the Solar Probe Cup (SPC) instrument for NASA’s Parker Solar Probe, a spacecraft designed to fly through the Sun’s outer atmosphere. SPC is part of the Solar Wind Electrons Alphas and Protons (SWEAP) instrument suite, which scoops electrically charged particles directly from the Sun’s atmosphere for study.

- CfA researchers are currently designing detectors for the next-generation X-ray observatory, Lynx. The instruments on this space telescope will provide astronomers with extraordinarily high-resolution views of the X-ray sky, including objects like black holes and galaxy clusters. Lynx has been proposed to NASA for consideration in the 2020 Decadal Survey, with a proposed launch date in the mid-2030s.

How We See

For thousands of years, the human eye was the only detector technology available. The earliest observational tools, including the first telescopes, relied on an astronomer to actually see the astronomical body. In the 19th century, astronomers began using photography to record images, along with prisms and gratings to split light into its spectrum. Both methods opened new avenues for discovery beyond what the eye can see.

Twentieth-century astronomers built telescopes capable of observing all types of light, from radio waves to gamma rays. To accommodate those new ways of seeing and to improve visible-light telescopes, astronomers developed new detector technologies.

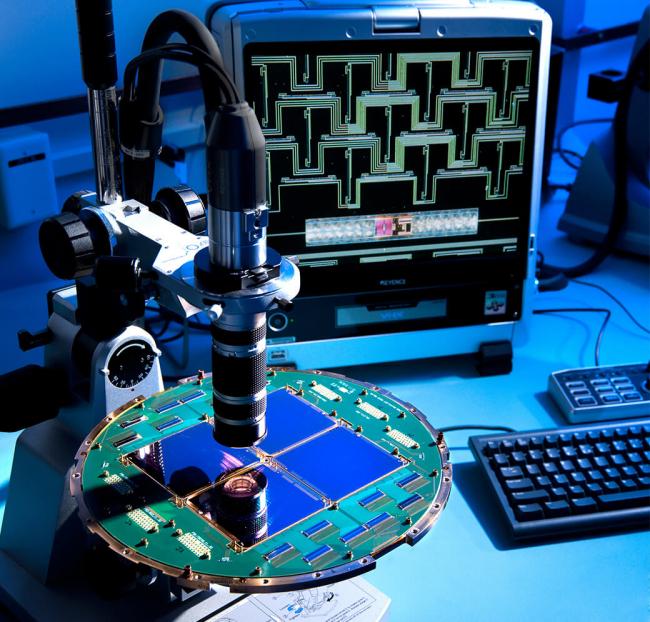

The BICEP2 telescope uses 512 extremely sensitive superconducting microwave detectors — the blue squares shown in the photo — to measure light from the cosmic microwave background.

Better Seeing Through Quantum Physics

One of the biggest advances in astronomy came from quantum physics. Charge-coupled devices (CCDs) are based on the fact that light is made up of individual particles called photons. Quantum physics describes how a photon with enough energy can knock an electron loose in a material, a process called the photoelectric effect. CCDs record the electrical signal produced by the photoelectric effect, allowing them to detect individual photons of certain wavelengths.
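
Why only "certain wavelengths"? A photon's energy is E = hc/λ, so longer wavelengths carry less energy, and below some threshold a photon can no longer free an electron. The sketch below illustrates that arithmetic. It assumes a silicon detector with a band gap of about 1.12 eV, a value not stated in this article, and the function names are illustrative only. It ignores everything else that limits a real detector's response, such as coatings, thickness, and absorption at short wavelengths.

```python
# h*c expressed in eV·nm (rounded CODATA value).
HC_EV_NM = 1239.84198

# Assumption: silicon detector, band gap of roughly 1.12 eV near room temperature.
SILICON_BAND_GAP_EV = 1.12


def photon_energy_ev(wavelength_nm: float) -> float:
    """Energy of a single photon in electronvolts, from E = h*c / wavelength."""
    if wavelength_nm <= 0:
        raise ValueError("wavelength must be positive")
    return HC_EV_NM / wavelength_nm


def cutoff_wavelength_nm(threshold_ev: float = SILICON_BAND_GAP_EV) -> float:
    """Longest wavelength whose photons still carry the threshold energy."""
    return HC_EV_NM / threshold_ev


def can_free_electron(wavelength_nm: float,
                      threshold_ev: float = SILICON_BAND_GAP_EV) -> bool:
    """True if one photon at this wavelength meets the energy threshold."""
    return photon_energy_ev(wavelength_nm) >= threshold_ev


if __name__ == "__main__":
    # Green visible light, near-infrared just past the cutoff, and a 2 mm microwave.
    for label, wavelength in [("visible, 550 nm", 550.0),
                              ("near-infrared, 1500 nm", 1500.0),
                              ("microwave, 2 mm", 2.0e6)]:
        energy = photon_energy_ev(wavelength)
        print(f"{label}: {energy:.3g} eV, frees electron: {can_free_electron(wavelength)}")
    print(f"approximate silicon cutoff: {cutoff_wavelength_nm():.0f} nm")
```

Under these assumptions the cutoff falls near 1,100 nm, in the near-infrared: visible photons clear the threshold comfortably, while microwave photons fall short by a factor of more than a thousand.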

That ability makes CCDs far more sensitive than photographic film, so astronomers could detect fainter objects, see details in closer objects, and view more distant phenomena than ever before. In addition, CCDs can view the sky continuously, unlike film, which has to be changed once it has reached optimum exposure. Consumer digital cameras are built on closely related image-sensor technology, but filter light differently than observatories do.

As electronics get cheaper, some telescopes now use a slightly different type of detector, based on the same electronics used in computers: complementary metal–oxide–semiconductor (CMOS) detectors. These are less expensive than CCDs, use less energy, and are potentially better for higher-energy light such as X-rays. CMOS detectors also respond faster to light, which means they produce images more quickly. Researchers are developing detectors that reduce many of the trade-offs from using CMOS detectors, including some undesirable electronic noise.

Cold Light

In astronomy, CCDs are used across a wide spectrum, primarily visible, infrared, and ultraviolet light. While NASA’s Chandra X-ray Observatory also uses a type of CCD in one of its detectors, astronomers need other types of detectors for different observations.

One of these is a “bolometer”, which uses the fact that light carries energy. That energy warms up the material it strikes. Bolometers used in precision microwave and radio detectors are kept at super-cold temperatures, where the material is a superconductor: electricity flows without resistance. When a photon strikes, it warms the superconductor just enough to push it toward its normal, resistive state, and the resulting change in resistance is the signal the instrument records.
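
The warming described above can be sketched with the standard idealized bolometer model: an absorber with heat capacity C is connected to a cold bath through a weak thermal link of conductance G, so a steady absorbed power P raises its temperature by ΔT = P/G, and it responds on a timescale τ = C/G. The Python sketch below is a simplified illustration, not a description of any instrument mentioned in this article. All numerical values are hypothetical, and the sharp superconducting transition is an assumption made for clarity (real sensors operate within a transition of finite width).

```python
def temperature_rise_k(absorbed_power_w: float,
                       thermal_conductance_w_per_k: float) -> float:
    """Steady-state temperature rise of the absorber: delta_T = P / G."""
    return absorbed_power_w / thermal_conductance_w_per_k


def time_constant_s(heat_capacity_j_per_k: float,
                    thermal_conductance_w_per_k: float) -> float:
    """Thermal response time of the absorber: tau = C / G."""
    return heat_capacity_j_per_k / thermal_conductance_w_per_k


def is_superconducting(temperature_k: float, critical_temperature_k: float) -> bool:
    """Idealized sharp transition: superconducting only below the critical temperature."""
    return temperature_k < critical_temperature_k


if __name__ == "__main__":
    # Hypothetical values chosen only to make the arithmetic easy to follow.
    bath_temperature_k = 0.495      # temperature with no light falling on the absorber
    critical_temperature_k = 0.500  # assumed superconducting transition temperature
    absorbed_power_w = 1e-12        # 1 picowatt of absorbed light
    conductance_w_per_k = 1e-10     # weak thermal link to the cold bath
    heat_capacity_j_per_k = 1e-12   # small absorber heat capacity

    rise = temperature_rise_k(absorbed_power_w, conductance_w_per_k)
    warmed = bath_temperature_k + rise

    print(f"temperature rise: {rise * 1000:.1f} mK")
    print(f"response time: {time_constant_s(heat_capacity_j_per_k, conductance_w_per_k) * 1000:.1f} ms")
    print(f"superconducting before light arrives: {is_superconducting(bath_temperature_k, critical_temperature_k)}")
    print(f"superconducting after warming: {is_superconducting(warmed, critical_temperature_k)}")
```

The weak thermal link is the key design choice in this model: a smaller G gives a larger temperature change for the same absorbed power, at the cost of a slower response.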

Like CCDs, bolometers can detect single photons. They’re most useful when astronomers need to measure light from low-energy sources, which produce photons too weak to trigger the photoelectric effect. Bolometers are used in microwave observatories such as the South Pole Telescope (SPT), which measures the cosmic microwave background, light from when the universe was less than half a million years old.

